Deploying the rest of the platform landing zone

Now that we have some code that shows infrastructure changes and deploys them, let’s extend it to include the rest of the core ALZ resources.

You can follow along with the code in the repo. The below is written chronologically but you’ll be looking at the completed version of the code. It should still be understandable. If you want to follow the state of the repo at the time of writing, you can use this commit.

Custom policy definitions

Custom policy definitions are a little messier than the rest: at their core they’re still all in JSON, and they’re imported in from another repo. But we can work with them.

The root of it in ALZ-Bicep is this folder.

definitions creates the policies, then assignments applies them. I’ve copied across the module definitions and the folders in lib they depend on (into src/modules), then customised one of the samples into src/customPolicyDefinitions.bicep in https://github.com/GSGBen/gsm-platform. As in the previous post, I’ve also removed the telemetry from the module.

Now, we’re not fully DRY: parTargetManagementGroupId is written literally, not populated by common variables that also populate the management group module variables. You might want to hoist this out (via a single Bicep file), change the modules, or use a parameters file. We’ll keep it for now and maybe revisit it later as we add more.

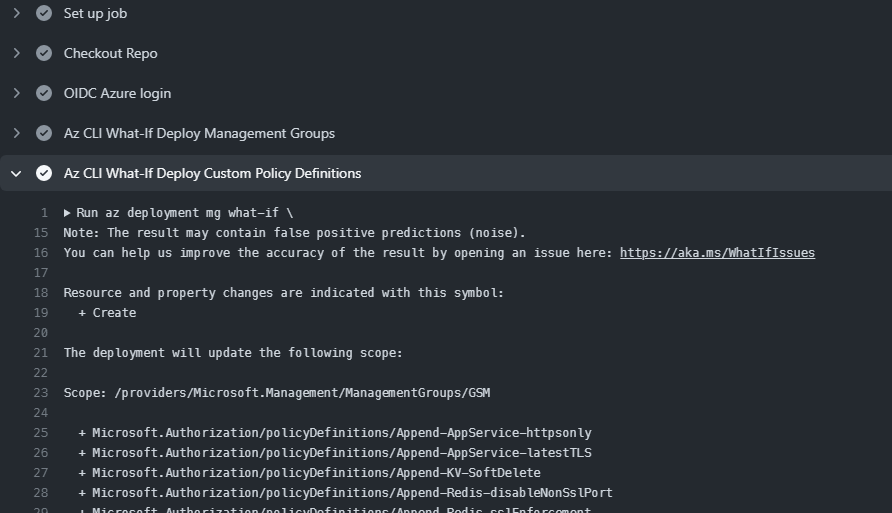

At this point we’ll run a quick what-if to see if the deployment would work. You should see all the new definitions listed.

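    # Parameters for the management-group-scoped deployment, splatted below.
    # Note: 'mm' is minutes in .NET format strings ('MM' would repeat the month).
    $inputObject = @{
      DeploymentName    = 'alz-PolicyDefsDeployment-{0}' -f (-join (Get-Date -Format 'yyyyMMddTHHmmssffffZ')[0..63])
      Location          = 'Australia East'
      ManagementGroupId = 'GSM'
      TemplateFile      = "src/customPolicyDefinitions.bicep"
    }

    # -WhatIf previews the changes without deploying anything
    New-AzManagementGroupDeployment @inputObject -WhatIf -WhatIfResultFormat ResourceIdOnly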

Now that it works we can add it to the what-if pipeline. The arm-deploy action doesn’t support giving us all the output, so I’ll use the az cli one again. It’s looking like we’ll probably repeat the $GITHUB_OUTPUT code a lot, so I’ve extracted it into pipeline/github_output.sh for a quick fix. In production you could fork the arm-deploy action and add an option that allows storing all STDOUT.
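A helper like this can be quite small. The following is a minimal sketch, not the actual contents of pipeline/github_output.sh: the function name write_github_output and its arguments are hypothetical. It relies on the multi-line syntax GitHub Actions accepts in the $GITHUB_OUTPUT file (name<<DELIMITER, then the value, then the delimiter on its own line).

```shell
# Sketch only: write_github_output is a hypothetical name, not the repo's script.
# Appends a (possibly multi-line) value to the file GitHub Actions exposes
# as $GITHUB_OUTPUT, using the name<<DELIMITER multi-line syntax.
write_github_output() {
  name="$1"
  value="$2"
  # A delimiter that is unlikely to occur inside the what-if output itself.
  delimiter="EOF_${name}_$$"
  {
    printf '%s<<%s\n' "$name" "$delimiter"
    printf '%s\n' "$value"
    printf '%s\n' "$delimiter"
  } >> "$GITHUB_OUTPUT"
}
```

A step would then capture the what-if output into a variable and pass it as the second argument, and a later step could read it back through the step’s outputs.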

Note: you have to mark scripts as executable before GitHub Actions gets hold of them. On Windows you can use git: git update-index --chmod=+x pipeline/github_output.sh.

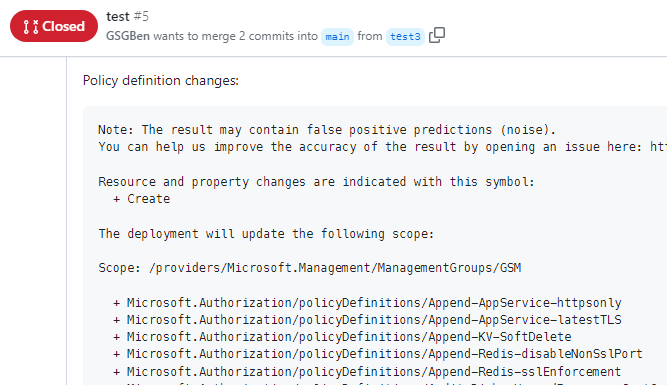

I’ve also updated the add-output-to-comment code to add the output of both steps. At this point the what-if pipeline is working.

Now we can add it to the production deployment. I’ve edited deploy.yml to hoist up the env variables and added a deployment step for the policy definitions. We’re repeating the variables - in production you could extract these and share them via GitHub environment env vars.

Note that at this point the pipeline wouldn’t work in one run: things like the custom policy definitions depend on resources created in previous steps (management groups in this case), and the Bicep validation would fail. I don’t think Bicep supports a solution to this at the moment for all the different scopes we’re deploying at. A single orchestration file would be better, but there are Bicep limitations: you need to use modules to change the scope (e.g. deploying to a different subscription), but you can’t use the output of things created in those modules to set other scopes, because scopes require static values, not runtime-created values!

There might be movement on this in the future, but at the moment Terraform, or some imperative deployments at the start of the project, might be a better option if you need everything done in one run. Maybe the fabled Deployment Stacks will fix this? For now we’re going to continue with Bicep.

We don’t assign the policies yet - they require other modules so we continue with the order defined in https://github.com/Azure/ALZ-Bicep/wiki/DeploymentFlow.

Custom role definitions

For the next few deployment modules, things mostly* follow the same pattern and are quicker. Basically:

- Follow the deployment flow order above

- Copy the required module files from the linked folder, and any files they depend on

- Adjust as required (e.g. removing telemetry, adjusting relative paths if you’ve changed them like I have)

- Copy a sample, adjust the paths, adjust the variables

- Test the what-if deployment locally

- Create a new step in the what-if action to test the deployment in the pipeline

- Create a new step in the deployment action to deploy the component in the pipeline
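The local what-if step in that list could be scripted roughly as follows. This is a sketch under assumptions: whatif_mg is a hypothetical helper, it expects $location and $managementGroupPrefix to be set as they are in the pipeline, and it only covers templates deployed at management group scope (subscription-scoped templates need az deployment sub what-if instead).

```shell
# Sketch only: whatif_mg is a hypothetical helper name.
# Runs a management-group-scoped what-if for one template in src/.
# Assumes $location and $managementGroupPrefix are already set.
whatif_mg() {
  component="$1"
  az deployment mg what-if \
    --template-file "src/${component}.bicep" \
    --name "whatif_${component}" \
    --location "$location" \
    --management-group-id "$managementGroupPrefix"
}
```

For example, whatif_mg customPolicyDefinitions previews src/customPolicyDefinitions.bicep; other management-group-scoped templates would use the same call shape.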

I’ve deployed Custom Role Definitions like this.

Logging

Almost immediately though, things change again.

The deployment guide for logging has some imperative commands to create a resource group then deploy to that. We want to keep everything as declarative as possible, so I’ve adjusted src/logging.bicep to create a resource group at a subscription level, then create the logging inside that.

It also has the ID for the required management subscription specified directly (in the GitHub Environment variables). In production you’d create this in Bicep and use the output here, but you can only programmatically create subscriptions for the more enterprise offerings. I’m using the standard PAYG billing, so I’m just hard-coding mine. This means that the repo as it stands will take multiple deploys to succeed, and after the first you’ll have to manually create a subscription.

Note: if you create the subscription in the portal and it doesn’t show, click on this and clear the filter:

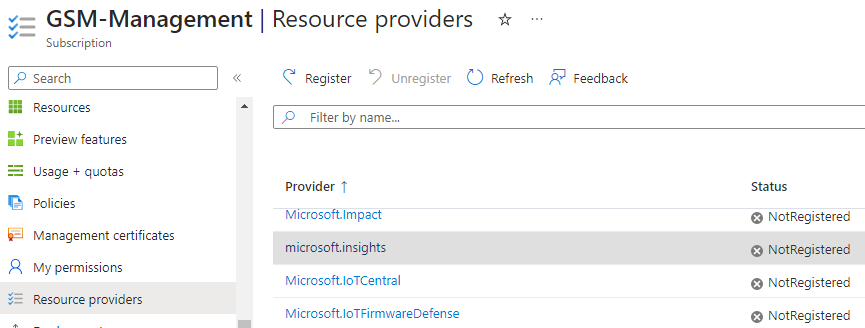

Also, after creating the subscription, enable the Microsoft.insights resource provider, which subsequent deployments require.
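If you’d rather not click through the portal, the provider can also be registered from the CLI. A minimal sketch, assuming you pass in the ID of the new subscription; register_insights is a hypothetical helper name.

```shell
# Sketch only: register_insights is a hypothetical helper name.
# Registers the Microsoft.Insights resource provider in the given
# subscription and waits for the registration to finish.
register_insights() {
  subscription_id="$1"
  az provider register \
    --namespace Microsoft.Insights \
    --subscription "$subscription_id" \
    --wait
}
```

Calling register_insights "$MANAGEMENT_SUBSCRIPTION_ID" after az login should be enough; az provider show --namespace Microsoft.Insights --query registrationState can confirm the result.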

We can test this locally more easily using Azure CLI because it allows us to set the subscription per-deployment. Run az login first though, to update the subscription list.

    az deployment sub what-if \
      --template-file src/logging.bicep \
      --name test_create_logging \
      --location "$location" \
      --subscription "$MANAGEMENT_SUBSCRIPTION_ID"

Management Groups Diagnostic Settings

Here the cracks start to appear in the Bicep-in-multi-step-pipeline model. To deploy the management group diagnostic settings (MGDS), we need to know the ID of the Log Analytics Workspace. Ideally we’d have a single Bicep file for this entire deployment that would allow easily passing it around, but Bicep’s multiple deployment scopes and restrictions on runtime-generating scope IDs prevent us. Terraform might allow it.

Let’s persist. We can get a value from a previous deployment step using outputs. In logging.bicep we first add an output:

    output outLogAnalyticsWorkspaceId string = baseline_logging.outputs.outLogAnalyticsWorkspaceId

Then in deploy.yml we can pull the value. Note that the output is populated even if the previous step didn’t make any changes. Also note that az cli’s default JSON output wraps the value in quotes, and --parameters doesn’t strip them, so we remove them ourselves.

    # Get the ID of the workspace deployed in the previous step. We have to remove the
    # quotes because `--parameters` in the next step doesn't do it automatically.
    LOG_ANALYTICS_WORKSPACE_ID=$(az deployment sub show \
      --subscription "$MANAGEMENT_SUBSCRIPTION_ID" \
      --name create_logging-test \
      --query properties.outputs.outLogAnalyticsWorkspaceId.value | tr -d '"')

    az deployment mg create \
      --template-file src/mgDiagSettings.bicep \
      --name create_mg_diag-${{ github.run_number }} \
      --location "$location" \
      --management-group-id "$managementGroupPrefix" \
      --parameters parLogAnalyticsWorkspaceResourceId="$LOG_ANALYTICS_WORKSPACE_ID"
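An alternative to stripping the quotes with tr is to ask the CLI for tab-separated output, which prints a single string value without JSON quoting. A minimal sketch, assuming the same output name as in the step above; get_workspace_id is a hypothetical helper name, and this is a variation rather than what deploy.yml currently does.

```shell
# Sketch only: get_workspace_id is a hypothetical helper name.
# Reads the workspace ID output from a subscription-scoped deployment.
# --output tsv prints the raw string, so no quote stripping is needed.
get_workspace_id() {
  deployment_name="$1"
  az deployment sub show \
    --subscription "$MANAGEMENT_SUBSCRIPTION_ID" \
    --name "$deployment_name" \
    --query properties.outputs.outLogAnalyticsWorkspaceId.value \
    --output tsv
}
```

The result of get_workspace_id create_logging-test could then be assigned to LOG_ANALYTICS_WORKSPACE_ID directly.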

The remaining issue is with the what-if test deployment. We don’t actually deploy anything in the previous what-if step (for logging), so we can’t get an output out of it. The hacky workaround I’ve used is to pass a dummy value, because the what-if doesn’t actually check that the referenced ID is valid. This isn’t ideal because it’s not validating as much as we want, but it’s what we have to do for now.
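One possible variation on the dummy value, sketched here under assumptions rather than taken from the repo: use the real workspace ID when an earlier production run has already deployed logging, and only fall back to a placeholder otherwise. The function name and the placeholder ID are hypothetical; the placeholder just follows the usual shape of a Log Analytics Workspace resource ID.

```shell
# Sketch only: the function name and the placeholder ID are hypothetical.
# Prints the real workspace ID if the logging deployment can be read,
# otherwise a dummy ID in the usual resource ID shape so what-if can still run.
workspace_id_or_dummy() {
  deployment_name="$1"
  dummy_id="/subscriptions/00000000-0000-0000-0000-000000000000/resourceGroups/dummy/providers/Microsoft.OperationalInsights/workspaces/dummy"
  real_id="$(az deployment sub show \
    --subscription "$MANAGEMENT_SUBSCRIPTION_ID" \
    --name "$deployment_name" \
    --query properties.outputs.outLogAnalyticsWorkspaceId.value \
    --output tsv 2>/dev/null)" || real_id=""
  if [ -n "$real_id" ]; then
    printf '%s\n' "$real_id"
  else
    printf '%s\n' "$dummy_id"
  fi
}
```

This keeps the first-ever run working while validating against the real ID on later runs.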

Hub and Spoke networking

Next we deploy hub networking. This is the central network that all other landing zone spokes will peer to and route through. Again this requires a new subscription (Connectivity) so we manually set that up first (if you have an enterprise agreement, automate this as well).

I’ve copied hubNetworking.bicep and its dependencies into src/modules. In src/hubNetworking.bicep I create a resource group, then deploy the module inside of it.

I’m not deploying the optional components that would incur (in this case, large) costs. In prod you’d be enabling most of these: Azure Firewall for central routing, and ExpressRoute or VPN Gateway (or both) for on-prem connectivity.

Custom policy assignments

Policy Assignment is a bit more of a hassle because of its dependent files. I’ve copied in alzDefaultPolicyAssignments.bicep and updated all the lib paths to the gsm-platform structure. I’ve also copied in its dependent modules (and theirs etc). These are then called by a new src/customPolicyAssignments.bicep file that wraps src/modules/alzDefaultPolicyAssignments.bicep and passes in the required parameters.

This page goes over how the ALZ repo deploys the policies, and how you can add your own. With the design in gsm-platform you could easily implement option 2 by adding extra calls inside src/customPolicyAssignments.bicep.

Conclusion and wrap-up

And that’s where we’ll draw the line for the main platform example. It’s barebones and missing some bits (e.g. no Identity subscription, no Azure AD stuff), but it serves as enough of a starting point to extend.

What do I think of Bicep? For something like this, it’s not the best tool for the job:

- Terraform could probably have this apply from a single top-level file and would let you tear it down easily. Bicep’s limitations around scopes and run-time values are painful.

- Not having a terraform destroy equivalent is unhelpful.

BUT:

- If you’re planning to use Bicep for other parts (e.g. app landing zones) going forward, it’s probably worth the pain of getting this part created in it as well for standardisation.

- It still doesn’t require storing state.

- Deployment Stacks should hopefully show up (soon-ish?) and remove the remaining pain points.

- It’s overall a great language with great VS Code integration.

If you’re planning to be Azure-only for the foreseeable future and can deal with the above pain points I think you should go all in on Bicep.
